About Hide-Away Storage

Hide-Away Storage has been a leader in the self-storage industry on Florida’s West Coast for 45 years, serving both household and commercial storage customers. Hide-Away now serves Spring Hill, Zephyrhills, Tampa, Brandon, Riverview, Parrish, Ellenton, Palmetto, Bradenton, Anna Maria Island, Longboat Key, Sarasota, Lakewood Ranch, Nokomis, Punta Gorda, Cape Coral, Fort Myers, Bonita Springs and Naples. New locations are also opening in North Port and Cape Coral.

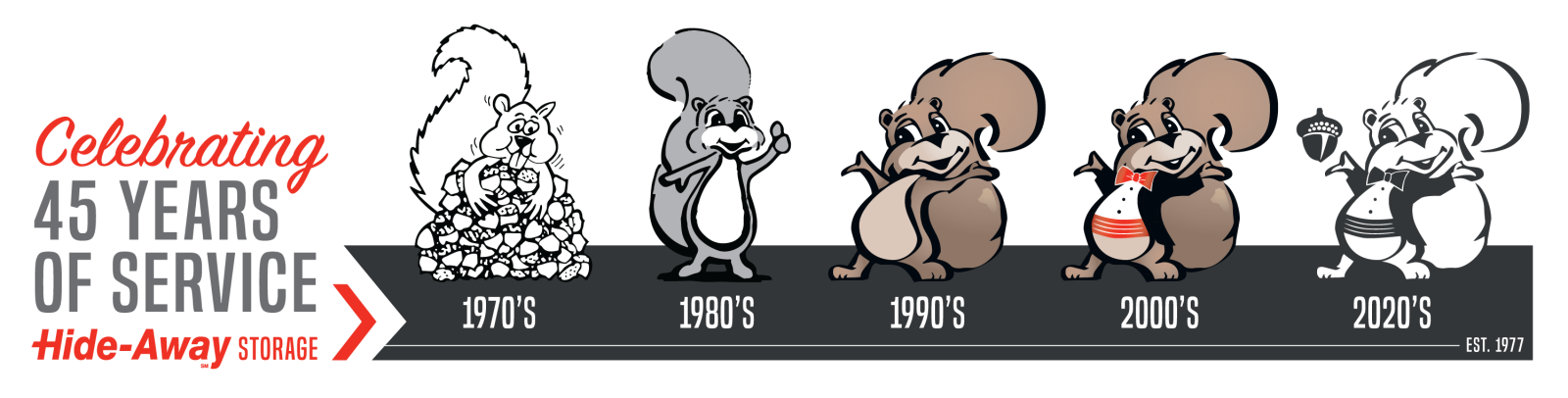

Our History

Bradenton residents Steve and Maureen Wilson were pioneers in the self-storage industry, opening their first facility in Bradenton at 32nd Street and Cortez Road in September 1977. The Wilsons believe God has continued to bless the company and has allowed Hide-Away to pursue, with increasing vigor, its purpose of serving its customers, employees, and the community with excellence. “I’ve no doubt that He will continue to keep His good hand on Hide-Away. And one day we will be seen as one of the country’s leading innovators in a new generation of self-storage that is aimed, as our company purpose statement declares, at ‘serving our customers with excellence,’” says Steve Wilson.

Our Growth

Since its founding, Hide-Away has expanded to serve Florida’s West Coast from Spring Hill in the north to Naples in the south. In 2016, Hide-Away joined with National Storage Affiliates, which has provided the capital for it to acquire facilities in Tampa, Brandon, Zephyrhills, Spring Hill, Riverview, Ruskin, Parrish, Palmetto and Punta Gorda, in addition to its home markets of Bradenton, Sarasota, St. Petersburg, Ellenton, Naples and Fort Myers. There are now more than 26 self-storage and portable storage facilities in the Hide-Away network.

Community Involvement

Hide-Away Storage is committed to adding value to our community. Our team members are encouraged to be personally involved in their local communities and actively support a variety of non-profit organizations.

Over more than four decades, Hide-Away Storage has also provided direct financial support, as well as free and discounted storage, to hundreds of local non-profit and community organizations.